Knowledge, Intent, and Evaluation: How Dynamics 365 Is Becoming Agentic

For the last few years, Copilot has defined Microsoft’s AI story in Business Applications. The introduction of Dynamics 365 autonomous service agents changes that. Earlier AI sat alongside users, suggesting replies, summarizing records, and assisting with tasks. That was valuable, but fundamentally assistive: it only reacted to user input.

In Dynamics 365 Customer Service and Contact Center, Microsoft is now making a more structural move. The platform is shipping first‑party autonomous service agents that take on defined responsibilities inside the system itself: discovering customer intent, generating and maintaining knowledge, and evaluating service quality at scale.

Individually, these agents are useful. Together, they change what Dynamics 365 is and how it is used.

CRM now emerges as an agentic system: one where intent, knowledge, and quality form a closed feedback loop that allows service operations to learn from outcomes, not just react to inputs.

Understanding Dynamics 365 Autonomous Service Agents

It’s worth clearly separating two things that often get blurred:

- Custom agents built in Copilot Studio: flexible, orchestration-heavy, and ideal for cross-system workflows and bespoke logic.

- First‑party autonomous service agents built into Dynamics 365: opinionated, platform-native capabilities embedded directly into Customer Service and Contact Center.

Microsoft groups these built-in capabilities under autonomous service agents, including the Customer Intent Agent, Customer Knowledge Management Agent, and Quality Evaluation Agent.

The architectural implication is significant: Microsoft is now standardizing “platform-grade intelligence” (intent discovery, knowledge creation, and quality oversight) so organizations don’t need to reinvent these foundations in every bot, flow, or channel.

This sets the stage for scale.

From Sentiment to Conformance: Why the Evaluation Agent Is the Keystone

Traditional CRM intelligence tends to stop at descriptive signals such as:

- “Customer sentiment was negative.”

- “Average handling time increased by 12%.”

- “This sampled call received a low QA score.”

This is helpful but not operationally decisive.

The Quality Evaluation Agent introduces a different class of insight: conformance.

Rather than asking “How did this interaction feel?”, it asks “Did it meet defined standards?” No feelings involved, just hard, cold facts.

Old world (telemetry and sentiment):

- “The customer sounded frustrated.”

- “This agent’s NPS was below average.”

- “AHT spiked this week.”

Agentic world (conformance):

- “The required verification step was missed.”

- “The interaction deviated from the compliance script at a specific point.”

- “The resolution lacked the expected knowledge reference for this issue type.”

This distinction matters because automation without correctness is risk.
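To make the difference concrete, here is a minimal Python sketch of what a conformance check looks at. It is a conceptual model only, not how the Quality Evaluation Agent works internally: the interaction is assumed to be already reduced to an ordered list of step labels, and the script (`REQUIRED_SCRIPT`) and its step names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical required script: the steps every interaction must contain, in order.
REQUIRED_SCRIPT = ["greet", "verify_identity", "diagnose", "resolve", "close"]


@dataclass
class ConformanceResult:
    missing_steps: list[str]
    first_deviation: int | None  # position among scripted steps where the order first diverged
    conformant: bool


def check_conformance(observed: list[str], script: list[str]) -> ConformanceResult:
    """Compare observed step labels with the required script (conceptual model)."""
    # Which required steps never happened at all?
    missing = [step for step in script if step not in observed]

    # Ignore unscripted steps (small talk etc.), then find the first scripted
    # step that does not match the step the script expects at that point.
    scripted = [step for step in observed if step in script]
    first_deviation = None
    for position, step in enumerate(scripted):
        if position >= len(script) or step != script[position]:
            first_deviation = position
            break

    return ConformanceResult(
        missing_steps=missing,
        first_deviation=first_deviation,
        conformant=not missing and first_deviation is None,
    )
```

Unlike a sentiment score, the result names which step was missed and where the interaction left the script, which is what makes it actionable.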

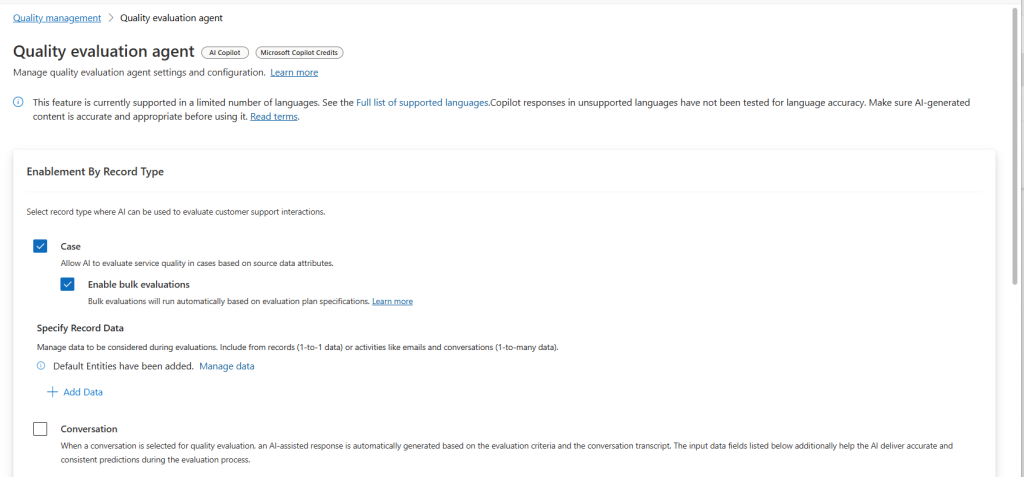

Microsoft’s evaluation model allows supervisors to define:

- evaluation criteria (what “good” looks like),

- evaluation plans (when and how interactions are assessed),

- and standardized outputs (scores, summaries, and recommended actions).
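The three building blocks can be modeled as plain data structures. The Python sketch below is hypothetical: `Criterion`, `EvaluationPlan`, and `EvaluationOutput` are illustrative names, not Dynamics 365 entities, and the weighted pass/fail scoring and hash-based sampling are assumptions chosen for simplicity.

```python
from __future__ import annotations

import zlib
from dataclasses import dataclass, field


@dataclass
class Criterion:
    """One element of 'what good looks like' (hypothetical structure)."""
    name: str
    weight: float


@dataclass
class EvaluationPlan:
    """When and how interactions are assessed (hypothetical structure)."""
    channels: set[str]
    sample_rate: float  # fraction of eligible interactions to evaluate, 0.0 to 1.0

    def selects(self, interaction_id: str, channel: str) -> bool:
        if channel not in self.channels:
            return False
        # Deterministic sampling: map the id into [0, 1) so re-runs pick the same interactions.
        bucket = zlib.crc32(interaction_id.encode("utf-8")) / 2**32
        return bucket < self.sample_rate


@dataclass
class EvaluationOutput:
    score: float  # 0 to 100
    summary: str
    recommended_actions: list[str] = field(default_factory=list)


def evaluate(criteria: list[Criterion], results: dict[str, bool]) -> EvaluationOutput:
    """Turn per-criterion pass/fail results into a standardized output."""
    total = sum(c.weight for c in criteria)
    earned = sum(c.weight for c in criteria if results.get(c.name, False))
    failed = [c.name for c in criteria if not results.get(c.name, False)]
    score = round(100 * earned / total, 1) if total else 0.0
    summary = f"{len(criteria) - len(failed)}/{len(criteria)} criteria met"
    actions = [f"Coach on: {name}" for name in failed]
    return EvaluationOutput(score, summary, actions)
```

Hashing the interaction ID makes sampling repeatable, so re-running a plan evaluates the same interactions.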

If you’re thinking long-term, this agent is not just nice to have but foundational. Conformance becomes the governance layer that makes everything else (knowledge generation, intent reuse, and eventually autonomy) safe to scale.

Measurement comes before action. This is also an easy agent to turn on: it does not edit information in the CRM and does not send anything externally. In other words, it is a great place to start!

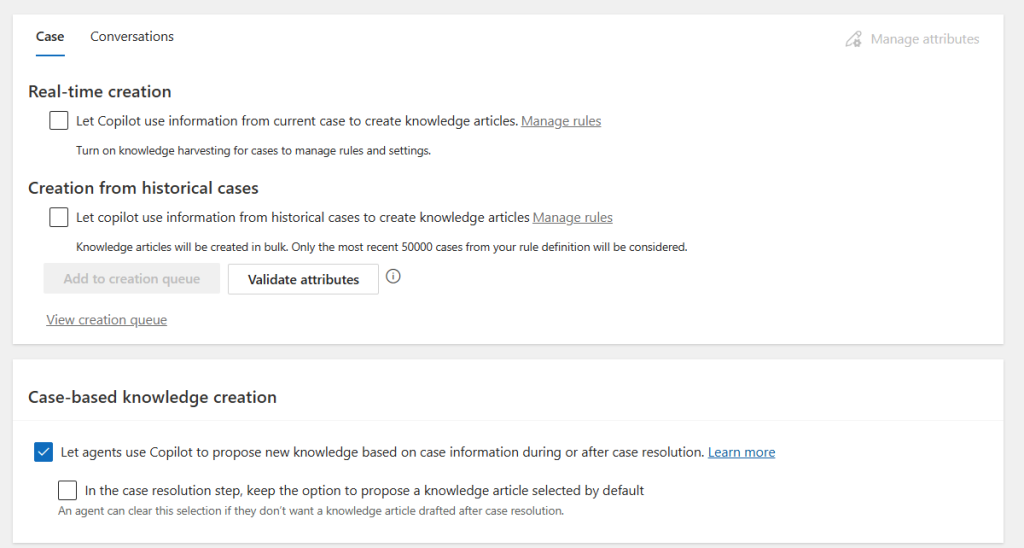

The Knowledge Management Agent: Turning Resolution into Institutional Memory

Knowledge has always been a service bottleneck. It’s expensive to author, difficult to maintain, and frequently out of sync with how issues actually appear in real customer interactions.

The Customer Knowledge Management Agent directly targets that failure mode.

Rather than relying on manual authoring, it autonomously turns:

- resolved cases,

- conversations,

- emails,

- and notes

into knowledge articles. This can happen either in real time as work is completed, or retrospectively from historical data. In practice, you can start by generating knowledge from your relevant historical data and then keep it updated in real time.
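The two modes (retrospective backfill, then real-time updates) can be sketched as follows. This is a hypothetical Python model, not the agent's implementation: articles are keyed by an assumed `issue_type`, and the article body simply copies the resolution notes, whereas the real agent generates article content with AI.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ResolvedCase:
    case_id: str
    issue_type: str
    resolution_notes: str


@dataclass
class KnowledgeArticle:
    issue_type: str
    body: str
    source_case_ids: list[str] = field(default_factory=list)


class KnowledgeBase:
    """Toy knowledge base keyed by issue type (hypothetical model)."""

    def __init__(self) -> None:
        self.articles: dict[str, KnowledgeArticle] = {}

    def ingest(self, case: ResolvedCase) -> KnowledgeArticle:
        """Real-time mode: create a draft for a new issue type, or refresh the existing one."""
        article = self.articles.get(case.issue_type)
        if article is None:
            article = KnowledgeArticle(case.issue_type, case.resolution_notes)
            self.articles[case.issue_type] = article
        else:
            # Keep the article aligned with the most recent actual resolution.
            article.body = case.resolution_notes
        article.source_case_ids.append(case.case_id)
        return article

    def backfill(self, history: list[ResolvedCase]) -> None:
        """Retrospective mode: seed the knowledge base from historical cases."""
        for case in history:
            self.ingest(case)
```

The same `ingest` method serves both modes: `backfill` runs it over history once, and calling it each time a case is resolved keeps articles current.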

If you care about Copilot quality, you care about knowledge quality. This agent focuses on the hardest part of knowledge management: keeping the knowledge base fresh, relevant, and grounded in actual resolution work.

The Intent Agent: Making Intent a Platform Capability

Historically, intent has been treated as a chatbot artifact: a list of intents, an NLU model, and a set of hard-coded branches. That approach breaks down quickly in real service organizations.

Intent changes. Channels fragment. Exceptions multiply.

The Customer Intent Agent takes a different approach. It uses generative AI to analyze past interactions and continuously discover and refine an intent library that the platform itself understands.

This shows up in two ways:

- Self-service: intent discovery informs follow-up questions, extracts intent attributes (like product or error code), and guides knowledge retrieval dynamically.

- Assisted service: intent detection updates continuously during live conversations, surfacing suggested questions and solutions to reduce handling time and improve consistency.
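A toy version of the self-service flow helps show the moving parts: match an utterance to an intent, extract attributes, and ask a follow-up only when something is missing. In this Python sketch, keyword overlap and regular expressions stand in for the generative AI the Customer Intent Agent actually uses, and the intents, product names, and `E###` error-code format are hypothetical.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass
class Intent:
    name: str
    keywords: set[str]
    required_attributes: tuple[str, ...] = ()
    follow_up: str = ""


# Hypothetical intent library; the real agent discovers and refines intents from past interactions.
INTENT_LIBRARY = [
    Intent("device_error", {"error", "crash", "broken"}, ("product",), "Which product are you using?"),
    Intent("billing_question", {"invoice", "charge", "refund"}),
]
KNOWN_PRODUCTS = ["contoso router", "contoso hub"]  # hypothetical product catalog
ERROR_CODE = re.compile(r"\bE\d{3}\b")  # assumed error-code format, e.g. E042


def detect_intent(utterance: str) -> tuple[Intent | None, dict[str, str]]:
    """Return the best-matching intent (or None) plus any extracted attributes."""
    text = utterance.lower()
    words = set(re.findall(r"[a-z]+", text))
    # Keyword overlap is a toy stand-in for generative intent detection.
    best = max(INTENT_LIBRARY, key=lambda intent: len(intent.keywords & words))
    intent = best if best.keywords & words else None

    attributes: dict[str, str] = {}
    match = ERROR_CODE.search(utterance)
    if match:
        attributes["error_code"] = match.group()
    for product in KNOWN_PRODUCTS:
        if product in text:
            attributes["product"] = product
    return intent, attributes


def next_question(intent: Intent | None, attributes: dict[str, str]) -> str | None:
    """Ask a follow-up only when the intent still lacks a required attribute."""
    if intent is None:
        return "Could you describe the issue in a few words?"
    missing = [name for name in intent.required_attributes if name not in attributes]
    return intent.follow_up if missing else None
```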

Architecturally, this is the shift:

Intent stops being something “the bot knows” and becomes something “the platform knows.”

Once intent is shared at the platform level, it can influence routing, knowledge selection, analytics, and automation across Customer Engagement, not just inside a single conversational flow.
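The difference between “the bot knows” and “the platform knows” is essentially publish/subscribe. In this hypothetical Python sketch, intent is detected once and published as a signal that routing, knowledge selection, and analytics each consume; the `IntentBus` class, queue names, and article IDs are illustrative assumptions, not Dynamics 365 APIs.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class IntentSignal:
    conversation_id: str
    intent: str


class IntentBus:
    """Hypothetical platform-level channel: intent is published once, consumed many times."""

    def __init__(self) -> None:
        self._consumers: list[Callable[[IntentSignal], None]] = []

    def subscribe(self, consumer: Callable[[IntentSignal], None]) -> None:
        self._consumers.append(consumer)

    def publish(self, signal: IntentSignal) -> None:
        for consumer in self._consumers:
            consumer(signal)


# Illustrative lookup tables; queue names and article IDs are made up.
ROUTING_TABLE = {"device_error": "tier2_hardware", "billing_question": "billing_team"}
ARTICLES_BY_INTENT = {"device_error": ["KB-101 Restart the device"]}

routed: dict[str, str] = {}
suggested: dict[str, list[str]] = {}
intent_counts: Counter = Counter()


def route(signal: IntentSignal) -> None:
    routed[signal.conversation_id] = ROUTING_TABLE.get(signal.intent, "general_queue")


def select_knowledge(signal: IntentSignal) -> None:
    suggested[signal.conversation_id] = ARTICLES_BY_INTENT.get(signal.intent, [])


def record_analytics(signal: IntentSignal) -> None:
    intent_counts[signal.intent] += 1


bus = IntentBus()
for consumer in (route, select_knowledge, record_analytics):
    bus.subscribe(consumer)
```

Adding another consumer requires no change to intent detection, and every consumer sees the same intent for the same conversation.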

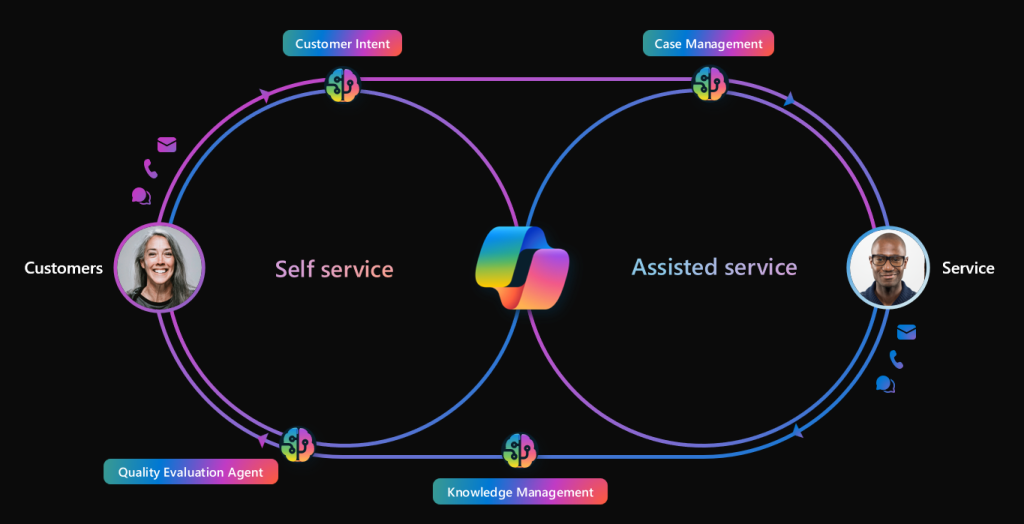

Closing the Loop: Intent → Knowledge → Evaluation → Improvement

This is where the system becomes agentic rather than just CRM with AI added on top.

Individually, these agents add value. Together, they form a closed feedback loop:

- Interactions (cases, chats, emails) generate signals

- Customer Intent Agent discovers and refines intent patterns

- Knowledge Management Agent creates and updates knowledge from real resolutions

- Execution happens across self-service and assisted channels

- Quality Evaluation Agent scores outcomes against defined standards

- Insights feed back to improve intent definitions, knowledge quality, and service standards

The system doesn’t just respond; it learns from the results of its own operation.

This is what “agentic” means in practice.

And critically, conformance measurement sits at the center, ensuring that learning and automation remain aligned with business and compliance expectations.
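The loop can be sketched as a single feedback pass. This Python model is hypothetical and heavily simplified: each interaction carries the intent it was assigned and a pass/fail conformance result, knowledge is represented only by an article version number per intent, and the 0.8 threshold is an arbitrary assumption.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Interaction:
    intent: str        # assigned by the intent step
    conformant: bool   # result of the evaluation step


def feedback_pass(interactions: list[Interaction], knowledge: dict[str, int],
                  threshold: float = 0.8) -> list[str]:
    """One intent -> knowledge -> evaluation -> improvement pass (hypothetical model).

    `knowledge` maps each intent to an article version. Intents with no article get a
    first draft (version 1); intents whose conformance rate is below `threshold` get
    their article revised (version + 1). Returns the intents that were updated.
    """
    totals: dict[str, int] = defaultdict(int)
    passed: dict[str, int] = defaultdict(int)
    for interaction in interactions:
        totals[interaction.intent] += 1
        passed[interaction.intent] += int(interaction.conformant)

    updated = []
    for intent, total in totals.items():
        rate = passed[intent] / total
        if intent not in knowledge or rate < threshold:
            knowledge[intent] = knowledge.get(intent, 0) + 1
            updated.append(intent)
    return updated
```

Run repeatedly, each pass uses evaluation results to decide where knowledge needs work, which is the “learns from the results of its own operation” property in miniature.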

How to Deploy Dynamics 365 Autonomous Service Agents

This isn’t an official Microsoft framework, but it maps well to how these first‑party agents are designed to be used.

- Observe: Enable intent discovery and quality evaluation first. Establish a baseline: what intents exist, how knowledge performs, and where quality breaks.

- Suggest: Use intent-driven questions and recommended solutions to guide agents and self-service, without handing over control.

- Shadow: Run the system “as if autonomous,” but keep humans executing. Compare what the agent would have done vs what actually happened using evaluation outputs.

- Assist: Allow agents to execute bounded, low-risk tasks (drafting, pre-filling, packaging). Knowledge article generation is a classic example.

- Guarded Autonomy: Only after outcomes are stable and conformance is measurable do you allow action within strict constraints, with auditability and rollback.

The sequencing matters: control first, then autonomy.
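The stage progression can be expressed as promotion gates. The Python sketch below is hypothetical: the metric names and thresholds in `GATES` are assumptions for illustration, not Microsoft guidance, and `shadow_agreement_rate` shows one simple way to compare what the agent would have done with what actually happened during the Shadow stage.

```python
from __future__ import annotations

from enum import IntEnum


class Stage(IntEnum):
    OBSERVE = 1
    SUGGEST = 2
    SHADOW = 3
    ASSIST = 4
    GUARDED_AUTONOMY = 5


# Hypothetical promotion gates: evidence required before leaving each stage.
GATES: dict[Stage, dict[str, float]] = {
    Stage.OBSERVE: {"baseline_weeks": 4},
    Stage.SUGGEST: {"suggestion_acceptance_rate": 0.6},
    Stage.SHADOW: {"shadow_agreement_rate": 0.9},
    Stage.ASSIST: {"assisted_conformance_rate": 0.95},
}


def shadow_agreement_rate(proposed: list[str], actual: list[str]) -> float:
    """Share of cases where the agent's would-be action matched what the human actually did."""
    if len(proposed) != len(actual):
        raise ValueError("proposed and actual must describe the same cases")
    if not proposed:
        return 0.0
    return sum(p == a for p, a in zip(proposed, actual)) / len(proposed)


def next_stage(current: Stage, metrics: dict[str, float]) -> Stage:
    """Promote at most one stage, and only when every gate metric meets its minimum."""
    if current is Stage.GUARDED_AUTONOMY:
        return current
    gate = GATES[current]
    if all(metrics.get(name, 0.0) >= minimum for name, minimum in gate.items()):
        return Stage(current + 1)
    return current
```

Promotion moves one stage at a time and requires every gate metric to be met, so autonomy is earned from measured outcomes rather than switched on.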

The Real Architectural Risk: Avoiding «Legacy AI»

If the feedback loop of intent, knowledge, and evaluation is the gold standard, ignoring it creates significant technical debt. While it’s tempting to stick to what we know, there is a looming risk: building Legacy AI.

You can absolutely ship value today using traditional scripted Omnichannel flows or heavily customized Copilot Studio topics. However, the risk is what those designs become over time. Without the backbone of Dynamics 365 autonomous service agents, scripted logic trees become brittle:

- Intents drift: User behavior changes, but your hard-coded triggers don’t.

- Exceptions grow: You end up with a «spaghetti» of conditions to handle edge cases.

- Maintenance becomes permanent overhead: You spend more time fixing the bot than improving the service.

As these first‑party agents mature, legacy designs become increasingly hard to migrate. This is because truly agentic systems assume a foundation that manual scripts lack: shared intent libraries, continuously evolving knowledge, and a governance layer based on conformance rather than just «if/then» logic.

The bottom line: Legacy AI is any automation that cannot participate in a feedback loop. It’s a dead end in a world moving toward self-learning systems.
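The contrast can be shown in a few lines. In this hypothetical Python sketch, exact-phrase matching stands in for scripted topic triggers; both routers miss the same drifted phrasing, but only the second records the miss as a signal that could feed intent refinement. The trigger phrases and intent names are illustrative.

```python
from __future__ import annotations

from collections import Counter

# Hypothetical hard-coded triggers, written when the bot was first built.
TRIGGERS = {"reset password": "password_reset", "track order": "order_status"}


def legacy_route(utterance: str) -> str:
    """Legacy AI: a fixed trigger table. Misses are silent and teach the system nothing."""
    text = utterance.lower()
    for phrase, intent in TRIGGERS.items():
        if phrase in text:
            return intent
    return "fallback"


class FeedbackRouter:
    """Same triggers, but every miss becomes a signal that can refine the intent library."""

    def __init__(self, triggers: dict[str, str]) -> None:
        self.triggers = dict(triggers)
        self.unmatched: Counter = Counter()

    def route(self, utterance: str) -> str:
        text = utterance.lower()
        for phrase, intent in self.triggers.items():
            if phrase in text:
                return intent
        self.unmatched[text] += 1  # record the miss instead of discarding it
        return "fallback"

    def drift_candidates(self, min_count: int = 2) -> list[str]:
        """Phrasings seen repeatedly without a match: candidates for new or updated intents."""
        return [text for text, count in self.unmatched.most_common() if count >= min_count]
```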

Finding the Balance: When First‑Party Agents Aren’t Enough

Does this mean we should abandon custom-built solutions entirely? Not at all. The goal isn’t to replace customization, but to stop wasting custom effort on «platform-grade» problems.

While Dynamics 365 autonomous service agents handle the heavy lifting of intent and knowledge, Copilot Studio remains the primary tool for high-value differentiation:

- Cross-system orchestration: Triggering workflows in external ERPs or legacy databases.

- Bespoke process logic: Handling complex, proprietary business rules that are unique to your brand.

- Domain-specific actions: Creating deep integrations that first-party agents don’t cover out-of-the-box.

The durable pattern is a hybrid approach: Use the first‑party agents as your «foundation» for intent and knowledge, then extend them with custom agents where your business needs to stand out. This ensures your governance remains anchored in measurable outcomes while your functionality stays flexible.
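The hybrid pattern amounts to a dispatch rule: let the first-party layer determine intent, and hand off to a custom extension only for intents that need business-specific actions. This Python sketch is hypothetical; the `warranty_claim` handler is a placeholder for something like a Copilot Studio agent calling an ERP, and no real Dynamics 365 or Copilot Studio API is used.

```python
from __future__ import annotations

from typing import Callable

# Hypothetical registry of custom extensions (e.g. Copilot Studio agents), keyed by intent.
CUSTOM_HANDLERS: dict[str, Callable[[dict[str, str]], str]] = {}


def custom_handler(intent: str):
    """Decorator that registers a custom extension for one intent."""
    def register(fn: Callable[[dict[str, str]], str]) -> Callable[[dict[str, str]], str]:
        CUSTOM_HANDLERS[intent] = fn
        return fn
    return register


@custom_handler("warranty_claim")
def file_warranty_claim(attributes: dict[str, str]) -> str:
    # Placeholder for a call into an external ERP; nothing external is invoked here.
    return f"Warranty claim drafted for serial {attributes.get('serial', 'unknown')}"


def handle(intent: str, attributes: dict[str, str]) -> str:
    """Intent comes from the first-party layer; custom code runs only where registered."""
    handler = CUSTOM_HANDLERS.get(intent)
    if handler is not None:
        return handler(attributes)  # differentiated, business-specific path
    return f"Answer from platform knowledge for '{intent}'"  # first-party foundation
```

Keeping the registry small is the point: custom code covers only what the first-party agents do not, so intent and knowledge stay anchored in the platform's feedback loop.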

What to Do With This

Dynamics 365 is moving toward agentic operation, and not just as a slogan. The platform now includes the components required to make it work: shared intent, autonomous knowledge creation, and scalable quality evaluation.

For architects, the takeaway is practical:

- Treat conformance as the center of gravity. Define quality, measure it, iterate.

- Treat intent as a platform capability, not a chatbot implementation detail.

- Treat knowledge as a living system, generated from real resolution work.

That combination is what turns isolated AI features into an agentic service platform.

Further Reading

This article gives an overview of the first‑party agents. For more detailed articles about each of them, see the following posts:

Knowledge Agent: https://sjoholt.com/the-knowledge-management-agent-turning-resolution-into-institutional-memory/

Intent Agent:

Evaluation Agent: https://sjoholt.com/from-sentiment-to-conformance-why-the-evaluation-agent-is-the-keystone/